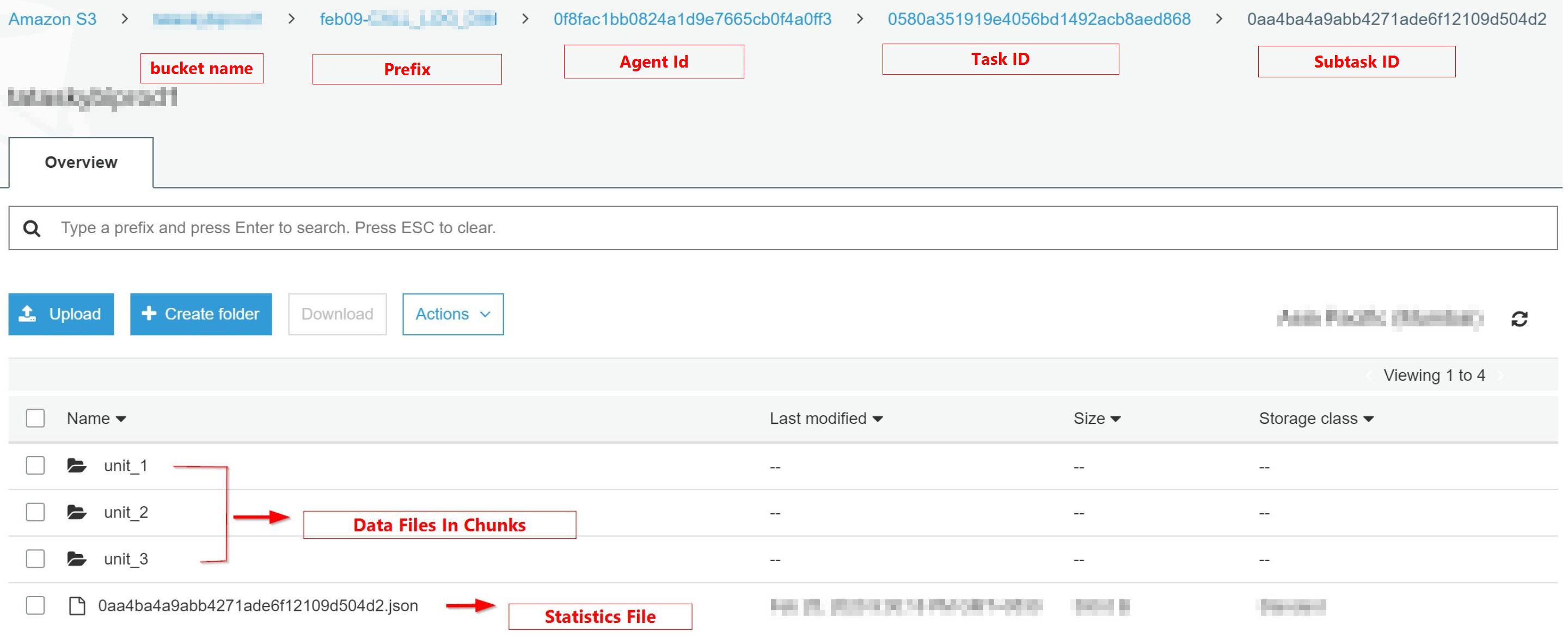

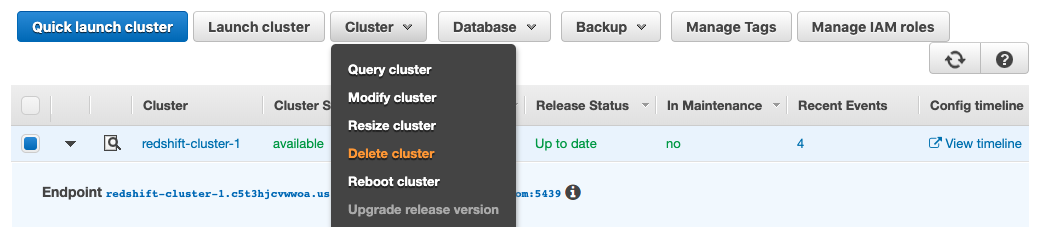

Recently I had to create a scheduled task to export the result of a SELECT query against an Amazon Redshift table as a CSV file and load it into a third-party business intelligence service. I was expecting the SELECT query to return a few million rows. I decided to implement this in Ruby since that is the default language in the company.

First, I tried to select the data in chunks of 100,000 rows using multiple SELECT queries and append each query result to a CSV file. That approach was too slow and I decided to look for an alternative.

In the Redshift docs I found the UNLOAD command, which unloads the result of a query to one or more files on S3. This command accepts a SQL query, an S3 object path prefix and a few other options. Its syntax looks like this:

    UNLOAD ('select_statement')
    | PARALLEL

A simple UNLOAD query would look like this:

    CREDENTIALS 'aws_access_key_id=KEY1;aws_secret_access_key=KEY2'

Some command options are really interesting but in this post I'll be talking only about the relevant ones.

UNLOAD may create more than one data file. It splits the exported data into multiple chunks of 6.2 GB. The command has the MANIFEST option to also create a JSON file with the list of data file names. Even though I was exporting a few million rows, I was not expecting the data to take more than 6.2 GB of space, so I didn't use this option.

If the data file already exists in the specified location then the UNLOAD command fails. The ALLOWOVERWRITE option overwrites the existing data and manifest files instead.

The data files use the | (pipe) character as the column separator by default. That was fine for the purpose of my task. The service supported pipes as the separators. If you need commas as the separators then use the DELIMITER AS ',' option.

To send the output to a single file, use PARALLEL OFF:

    unload ('select * from venue')
    to 's3://mybucket/tickit/unload/venue'
    credentials 'aws_access_key_id=KEY1;aws_secret_access_key=KEY2'
    parallel off

I also recommend using GZIP to make that file even smaller for download.

UNLOAD automatically encrypts data files using Amazon S3 server-side encryption (SSE-S3).

The data files don't have column headers but sometimes they may be required. You can use any select statement in the UNLOAD command that Amazon Redshift supports, except for a select that uses a LIMIT clause in the outer select. I added the column headers as the result of another query that was merged with the result of the first using UNION. UNION in Redshift fails if the queries return rows with different data types for the same columns. Because of that I had to cast all the values in the result to VARCHAR. In addition, UNION doesn't guarantee the row order in the result. Its result has to be sorted by some column in an order that ensures that the column names come first. In my case I sorted the rows by the ID column in descending order so that the name always goes before any numerical ID. The final query looked like this:

    UNLOAD ('

I used the s3cmd utility to download the data file and then imported it into the service.

With Redshift we can select data and send it to data sources available to us in the AWS Cloud. With the UNLOAD command, we can save files in CSV or JSON format directly to S3.

Prerequisites

Access to an AWS Redshift cluster, access to the query editor, and an IAM role with permissions to write to the S3 location. For more information about creating IAM roles for Redshift, see the AWS docs: Redshift and IAM Roles.

Exporting Data

To export data from Redshift to S3, first write the query that selects the data. In this example we'll have a single table, shows, with a list of shows with show titles and descriptions:

    select * from shows

We can use that select query with an UNLOAD command. To do so we'll need to define an S3 location, an IAM role for permissions, and any options to include. For example, we'll use the CSV option to export data in CSV format.

Example statement:

    UNLOAD ('select * from shows') to 's3://redshift-output/shows/'
    CREDENTIALS 'aws_iam_role=arn:aws:iam:::role/'

After running the UNLOAD statement in the query editor, you can find your results saved in S3 under the path s3://redshift-output/shows/. By default UNLOAD will write files in the format 0000_part_00, 0001_part_00, etc. A file is written for each slice, so the number of files depends on how many slices your cluster has. If your query returns only a little data, some of the output files will be empty.

The UNLOAD command takes a string for your query, so if you need quotes in it, select * from shows where title='AGT' for example, you'll need to escape the quotes. I use the doubled single-quote escape syntax, with '' for each quote:

    UNLOAD ('select * from shows where title=''AGT''') to 's3://redshift-output/shows.csv'

Options for the S3 output include the maximum file size, forcing a single file, the file name prefix, and more. Use the MAXFILESIZE option to set the maximum file size, with 5 MB being the smallest and 6.2 GB the largest.
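The quote-escaping rule for the UNLOAD query string is easy to get wrong by hand, so here is a minimal sketch of it in Python. The helper names `escape_for_unload` and `build_unload` are hypothetical, the KEY1/KEY2 credentials are placeholders, and the code only assembles the statement text; it does not connect to Redshift.

```python
def escape_for_unload(query: str) -> str:
    """Double every single quote so the query can sit inside UNLOAD ('...')."""
    return query.replace("'", "''")


def build_unload(query: str, s3_prefix: str, credentials: str, options=()) -> str:
    """Assemble an UNLOAD statement as text (hypothetical helper, no database access)."""
    parts = [
        f"UNLOAD ('{escape_for_unload(query)}')",
        f"TO '{s3_prefix}'",
        f"CREDENTIALS '{credentials}'",
    ]
    # Options sit outside the quoted query, so they are appended unescaped.
    parts.extend(options)
    return "\n".join(parts) + ";"


statement = build_unload(
    "select * from shows where title='AGT'",
    "s3://redshift-output/shows/",
    "aws_access_key_id=KEY1;aws_secret_access_key=KEY2",  # placeholder keys
    options=("DELIMITER AS ','", "ALLOWOVERWRITE", "PARALLEL OFF"),
)
print(statement)
```

Only the inner query is escaped. The S3 path, credentials string and options are passed through untouched, so this sketch assumes they come from trusted configuration rather than user input.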
